Carrera 290

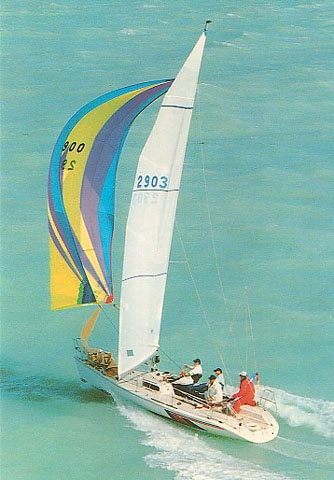

The Carrera 290 is a 29.17 ft fractional sloop designed by Håkan Södergren and built in fiberglass since 1992.

The Carrera 290 is an ultralight, very high-performance sailboat. It is very stable and stiff, but has a low righting capability if capsized. It is best suited as a racing boat.


Main features

| Parameter | Value |
|---|---|
| Model | Carrera 290 |
| Length | 29.17 ft |
| Beam | 9.45 ft |
| Draft | 5.57 ft |
| Country | ?? |
| Estimated price | ?? |


| Parameter | Value |
|---|---|
| Sail area / displacement | 32.68 |
| Ballast / displacement | 45.08 % |
| Displacement / length | 67.51 |
| Comfort ratio | 8.29 |
| Capsize screening value | 2.64 |

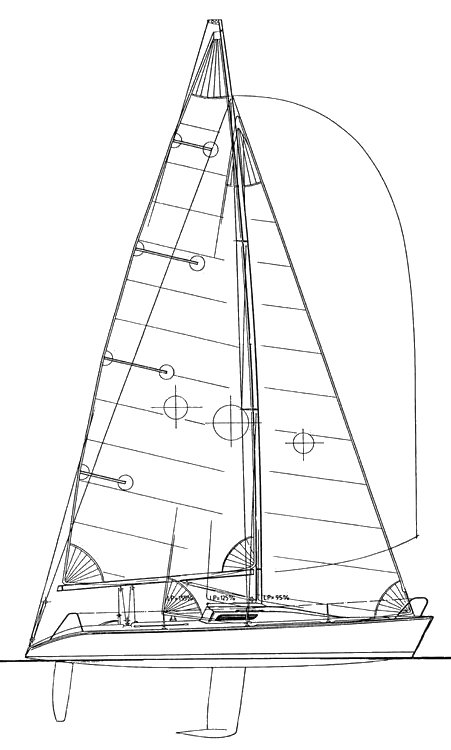

| Parameter | Value |
|---|---|
| Hull type | Monohull, fin keel with bulb and spade rudder |
| Construction | Fiberglass |
| Waterline length | 26.92 ft |
| Maximum draft | 5.57 ft |
| Displacement | 2950 lbs |
| Ballast | 1330 lbs |
| Hull speed | 6.95 knots |


| Parameter | Value |
|---|---|
| Rigging | Fractional sloop |
| Sail area (100%) | 419 sq ft |
| Air draft | ?? |
| Sail area fore | 186.82 sq ft |
| Sail area main | 232.42 sq ft |
| I (foretriangle height) | 34.50 ft |
| J (foretriangle base) | 10.83 ft |
| P (mainsail luff) | 35.43 ft |
| E (mainsail foot) | 13.12 ft |

| Parameter | Value |
|---|---|
| Number of engines | 1 |
| Total power | 0 HP |
| Fuel capacity | 0 gal |

Accommodations

| Parameter | Value |
|---|---|
| Water capacity | 0 gal |
| Headroom | 0 ft |
| Number of cabins | 0 |
| Number of berths | 0 |
| Number of heads | 0 |

Builder data

| Parameter | Value |
|---|---|
| Builder | ?? |
| Designer | Håkan Södergren |
| First built | 1992 |
| Last built | ?? |
| Number built | ?? |


37 Facts About Novosibirsk

Written by Adelice Lindemann. Modified & Updated: 25 Jun 2024. Reviewed by Sherman Smith.

Novosibirsk, often referred to as the “Capital of Siberia,” is a vibrant and dynamic city located in southwestern Siberia, Russia. With a population exceeding 1.5 million residents, it is the third most populous city in Russia and serves as the administrative center of Novosibirsk Oblast. Nestled along the banks of the Ob River, Novosibirsk is renowned for its rich cultural heritage, scientific advancements, and picturesque landscapes. As the largest city in Siberia, it offers a blend of modern and traditional attractions, making it a fascinating destination for locals and tourists alike. In this article, we delve into 37 interesting facts about Novosibirsk, shedding light on its history, architecture, natural wonders, and cultural significance. Whether you are planning a visit or simply curious about this intriguing city, these facts will give you a deeper understanding of what Novosibirsk has to offer.

1. Novosibirsk is the third-largest city in Russia. Situated in southwestern Siberia, Novosibirsk has a population of over 1.6 million people, making it one of the largest and most vibrant cities in the country.
2. The city was founded in 1893. Novosibirsk was established as a railway junction on the Trans-Siberian Railway and played a significant role in the development of Siberia.
3. It is known as the “Capital of Siberia.” Due to its economic and cultural significance, Novosibirsk is often referred to as the capital of Siberia.
4. Novosibirsk is a major industrial center. The city is home to a wide range of industries, including machinery manufacturing, chemical production, energy, and metallurgy.
5. It is famous for its scientific and research institutions. Novosibirsk hosts several renowned scientific and research institutions that contribute to advances in fields including nuclear physics, chemistry, and biotechnology.
6. The Novosibirsk Opera and Ballet Theatre is one of the largest in Russia. This iconic cultural institution stages world-class ballet and opera performances and is a must-visit for art enthusiasts visiting the city.
7. The city has a vibrant theater scene. Novosibirsk boasts numerous theaters, showcasing a wide variety of performances from traditional plays to experimental productions.
8. Novosibirsk is a major transportation hub. Thanks to its strategic location on the Trans-Siberian Railway, the city serves as a crucial hub connecting Siberia with other regions of Russia.
9. The Ob River flows through Novosibirsk. The majestic Ob River adds to the city’s natural beauty and provides opportunities for recreational activities such as boating and fishing.
10. Novosibirsk is known for its harsh winter climate. With temperatures dropping well below freezing, the city experiences a true Siberian winter with snowy landscapes.
11. The Novosibirsk Zoo is one of the largest and oldest in Russia. Home to a wide variety of animal species, including rare and endangered ones, the zoo attracts visitors from near and far.
12. Novosibirsk is a center for academic excellence. The city is home to Novosibirsk State University, one of the top universities in Russia, renowned for its research and education programs.
13. The Novosibirsk Metro is one of the newer metro systems in Russia. Opened in 1985, the Novosibirsk Metro provides efficient transportation for residents and visitors alike.
14. Novosibirsk is surrounded by picturesque nature. The city’s surroundings, including the Novosibirsk Reservoir and, within reach, the Altai Mountains, offer numerous opportunities for outdoor activities.
15. The Novosibirsk State Circus is famous for its performances. Showcasing talented acrobats, clowns, and animal acts, the circus offers entertaining shows for all ages.
16. Novosibirsk is home to a thriving art scene. The city is dotted with art galleries showcasing the works of local and international artists.
17. Novosibirsk has a diverse culinary scene. From traditional Russian cuisine to international flavors, the city offers a wide range of dining options to suit every taste.
18. The Novosibirsk State Museum of Local History is a treasure trove of historical artifacts. Exploring the museum gives visitors insight into the rich history and culture of the region.
19. Novosibirsk is known for its vibrant nightlife. The city is home to numerous bars, clubs, and entertainment venues, ensuring a lively atmosphere after dark.
20. Novosibirsk has a strong ice hockey tradition. Ice hockey is a popular sport in the city, with local teams competing in national and international tournaments.
21. The Novosibirsk State Philharmonic Hall hosts world-class musical performances. Music lovers can enjoy classical concerts and symphony orchestra performances in this renowned venue.
22. Novosibirsk is home to Akademgorodok, a scientific research town. Akademgorodok is a unique scientific community located near Novosibirsk, housing numerous research institutes and academic organizations.
23. Novosibirsk has a unique blend of architectural styles. The city features a mix of Soviet-era buildings, modern skyscrapers, and historic structures, creating an eclectic cityscape.
24. Novosibirsk is an important center for ballet training and education. The city’s ballet schools and academies attract aspiring dancers from across Russia and abroad.
25. Novosibirsk is a gateway to the stunning Altai Mountains. Within reach of the city, the Altai Mountains offer breathtaking landscapes, hiking trails, and opportunities for outdoor adventures.
26. Novosibirsk hosts various cultural festivals throughout the year. From music and theater festivals to art exhibitions, the city’s cultural calendar is always packed with events.
27. Novosibirsk is a green city with numerous parks and gardens. Residents and visitors can enjoy nature in the city’s well-maintained parks and botanical gardens.
28. Novosibirsk is a center for technology and innovation. The city is home to several technology parks and innovation centers that foster the development of cutting-edge technologies.
29. Novosibirsk has a strong sense of community. Its residents are known for their hospitality and friendliness, making visitors feel welcome.
30. Novosibirsk is a paradise for shopping enthusiasts. The city is dotted with shopping malls, boutiques, and markets, offering a wide range of shopping options.
31. Novosibirsk has a rich literary heritage. The city has been home to many well-known Russian writers and poets, whose works are celebrated in literary circles.
32. Novosibirsk is a popular destination for medical tourism. The city is known for its advanced medical facilities and expertise, attracting patients from around the world.
33. Novosibirsk has a well-developed public transportation system. With buses, trams, trolleybuses, and the metro, getting around the city is convenient and efficient.
34. Novosibirsk is a city of sport. The city has a strong sports culture, with numerous sports facilities and opportunities for athletic activities.
35. Novosibirsk has a thriving IT and tech industry. The city is home to numerous IT companies and startups, contributing to the development of the digital economy.
36. Novosibirsk celebrates its anniversary every year on July 12th. The city comes alive with festivities, including concerts, fireworks, and cultural events, to commemorate its foundation.
37. Novosibirsk offers a high quality of life. With its excellent educational and healthcare systems, cultural amenities, and vibrant community, Novosibirsk provides a great living environment for its residents.

Novosibirsk is a fascinating city filled with rich history, stunning architecture, and a vibrant cultural scene. From its origins as a small village to becoming the third-largest city in Russia, Novosibirsk has emerged as a major economic and cultural hub in Siberia. With its world-class universities, theaters, museums, and natural attractions, Novosibirsk offers a wide range of experiences for visitors. Whether you’re exploring the impressive Novosibirsk Opera and Ballet Theatre, strolling along the picturesque banks of the Ob River, or immersing yourself in the city’s scientific and technological achievements at Akademgorodok, Novosibirsk has something for everyone. From iconic landmarks such as the Alexander Nevsky Cathedral to vibrant festivals like the International Jazz Festival, Novosibirsk has a unique charm that will captivate any traveler. So make sure to include Novosibirsk in your travel itinerary and discover the hidden gems of this remarkable city.

Q: What is the population of Novosibirsk?

A: As of 2021, the estimated population of Novosibirsk is around 1.6 million people.

Q: Is Novosibirsk a safe city to visit?

A: Novosibirsk is generally considered a safe city for tourists. However, it is always advisable to take standard precautions, such as avoiding unfamiliar areas at night and keeping your belongings secure.

Q: What is the best time to visit Novosibirsk?

A: The best time to visit Novosibirsk is from June to September, when the weather is pleasant and suitable for outdoor activities. If you enjoy winter chill and snow, however, a winter visit can also be a unique experience.

Q: Are there any interesting cultural events in Novosibirsk?

A: Yes. Novosibirsk is known for its vibrant cultural scene and hosts various festivals throughout the year, including the International Jazz Festival, the Novosibirsk International Film Festival, and the Siberian Ice March Festival.

Q: Can I visit Novosibirsk without knowing Russian?

A: While knowing some basic Russian phrases can be helpful, many establishments in Novosibirsk, especially in tourist areas, have English signage and staff who can communicate in English. Learning a few essential Russian phrases can still enhance your travel experience.

Novosibirsk’s captivating history and vibrant culture make it a must-visit destination for any traveler. From its humble beginnings as a small settlement to its current status as Russia’s third-largest city, Novosibirsk has a story worth exploring. If you’re a sports enthusiast, don’t miss the opportunity to learn more about the city’s beloved football club, FC Sibir Novosibirsk. With its rich heritage and passionate fan base, the club has become an integral part of Novosibirsk’s identity.

1994 Carrera 290

Seller's Description

Fast, fun racer.

- Retractable bowsprit pole
- 3 mainsails
- 3 spinnakers
- 5 headsails
- Harken winches
- New gauges
- New non-slip deck paint

Equipment:

- 6 HP Nissan
- VHF marine radio
- Raymarine gauges (wind speed/direction, speedometer, depth)
- Boss stereo
- Trailer included, in great shape

Hull Speed

The theoretical maximum speed at which a displacement hull can move efficiently through the water is determined by its waterline length and displacement. A boat may be unable to reach this speed if it is underpowered or heavily loaded, though it may exceed this speed given enough power.

Classic hull speed formula (knots, with LWL in feet):

$$\text{Hull speed} = 1.34 \times \sqrt{LWL}$$

Alternative formula based on the displacement/length ratio:

$$\text{Max speed/length ratio} = \frac{8.26}{(D/L)^{0.311}}, \qquad \text{Hull speed} = \text{Max speed/length ratio} \times \sqrt{LWL}$$

Sail Area / Displacement Ratio

A measure of the power of the sails relative to the weight of the boat. The higher the number, the higher the performance, but the harder the boat will be to handle. This ratio is a non-dimensional value that facilitates comparisons between boats of different types and sizes.

$$SA/D = \frac{SA}{(D/64)^{2/3}}$$

where SA is the sail area in square feet and D is the displacement in pounds.
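As a quick check, the classic hull-speed formula and the SA/D ratio above can be evaluated with the Carrera 290 data-sheet values (LWL 26.92 ft, displacement 2950 lbs, 100% sail area 419 sq ft). This is a minimal Python sketch; the function and constant names are illustrative and do not come from any library. It reproduces the listed 6.95-knot hull speed. The SA/D it yields (about 32.6) is slightly below the tabulated 32.68; the source does not say exactly which inputs it used, so that small gap is presumably rounding or a different sail-area figure.

```python
import math

# Carrera 290 data-sheet values (feet, pounds, square feet).
LWL_FT = 26.92
DISPLACEMENT_LBS = 2950
SAIL_AREA_SQFT = 419


def hull_speed_classic(lwl_ft: float) -> float:
    """Classic displacement hull speed in knots: 1.34 * sqrt(LWL in feet)."""
    return 1.34 * math.sqrt(lwl_ft)


def sail_area_displacement(sail_area_sqft: float, disp_lbs: float) -> float:
    """SA/D = SA / (D / 64)^(2/3).

    D / 64 converts displacement in pounds to cubic feet of seawater
    (about 64 lb per cubic foot); raising it to 2/3 gives an area,
    so the ratio is dimensionless.
    """
    return sail_area_sqft / (disp_lbs / 64) ** (2 / 3)


if __name__ == "__main__":
    print(f"Hull speed: {hull_speed_classic(LWL_FT):.2f} kn")  # Hull speed: 6.95 kn
    print(f"SA/D: {sail_area_displacement(SAIL_AREA_SQFT, DISPLACEMENT_LBS):.2f}")
```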

Ballast / Displacement Ratio

A measure of the stability of a boat's hull that suggests how well a monohull will stand up to its sails. The ballast/displacement ratio indicates how much of the boat's weight is placed for maximum stability against capsizing, and is an indicator of stiffness and resistance to capsize.

$$\text{Ballast ratio} = \frac{\text{Ballast}}{\text{Displacement}} \times 100$$

Displacement / Length Ratio

A measure of the weight of the boat relative to its length at the waterline. The higher a boat's D/L ratio, the more easily it will carry a load and the more comfortable its motion will be. The lower the ratio, the less power it takes to drive the boat to its nominal hull speed or beyond.

$$D/L = \frac{D/2240}{(0.01 \times LWL)^3}$$

where D is the displacement in pounds (dividing by 2240 converts it to long tons) and LWL is in feet.
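The two ratios above can be checked against the data sheet in the same way, and once D/L is known the alternative hull-speed formula from the Hull Speed section can be evaluated too. This Python sketch uses hypothetical function names and the listed inputs (ballast 1330 lbs, displacement 2950 lbs, LWL 26.92 ft); it reproduces the tabulated 45.08 % and 67.51. The D/L-based hull speed comes out at roughly 11.6 knots, far above the classic 6.95: for a boat this light the formula predicts speeds well beyond 1.34√LWL, in line with the remark that a boat may exceed hull speed given enough power. The source does not say how well the formula applies to ultralight hulls, so treat that figure loosely.

```python
import math

# Carrera 290 data-sheet values (pounds, feet).
BALLAST_LBS = 1330
DISPLACEMENT_LBS = 2950
LWL_FT = 26.92


def ballast_ratio_pct(ballast_lbs: float, disp_lbs: float) -> float:
    """Ballast / displacement expressed as a percentage."""
    return ballast_lbs / disp_lbs * 100


def displacement_length(disp_lbs: float, lwl_ft: float) -> float:
    """D/L = (D / 2240) / (0.01 * LWL)^3, with D converted to long tons."""
    return (disp_lbs / 2240) / (0.01 * lwl_ft) ** 3


def hull_speed_from_dl(dl_ratio: float, lwl_ft: float) -> float:
    """Hull speed in knots from the D/L-based formula:
    max speed/length ratio = 8.26 / (D/L)^0.311, multiplied by sqrt(LWL)."""
    max_sl = 8.26 / dl_ratio ** 0.311
    return max_sl * math.sqrt(lwl_ft)


if __name__ == "__main__":
    dl = displacement_length(DISPLACEMENT_LBS, LWL_FT)
    print(f"Ballast ratio: {ballast_ratio_pct(BALLAST_LBS, DISPLACEMENT_LBS):.2f} %")  # 45.08 %
    print(f"D/L: {dl:.2f}")  # D/L: 67.51
    print(f"D/L-based hull speed: {hull_speed_from_dl(dl, LWL_FT):.1f} kn")
```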

Comfort Ratio

This ratio assesses how quickly and abruptly a boat's hull reacts to waves in a significant seaway, these being the elements of a boat's motion most likely to cause seasickness.

$$\text{Comfort ratio} = \frac{D}{0.65 \times (0.7\,LWL + 0.3\,LOA) \times \text{Beam}^{1.33}}$$
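The comfort ratio formula above can be evaluated directly from the data sheet (displacement 2950 lbs, LWL 26.92 ft, LOA 29.17 ft, beam 9.45 ft). A minimal Python sketch with an illustrative function name; it reproduces the tabulated value of 8.29.

```python
# Carrera 290 data-sheet values (pounds, feet).
DISPLACEMENT_LBS = 2950
LWL_FT = 26.92
LOA_FT = 29.17
BEAM_FT = 9.45


def comfort_ratio(disp_lbs: float, lwl_ft: float, loa_ft: float, beam_ft: float) -> float:
    """Comfort ratio = D / (0.65 * (0.7 * LWL + 0.3 * LOA) * Beam^1.33)."""
    effective_length = 0.7 * lwl_ft + 0.3 * loa_ft  # weighted mix of waterline and overall length
    return disp_lbs / (0.65 * effective_length * beam_ft ** 1.33)


if __name__ == "__main__":
    ratio = comfort_ratio(DISPLACEMENT_LBS, LWL_FT, LOA_FT, BEAM_FT)
    print(f"Comfort ratio: {ratio:.2f}")  # Comfort ratio: 8.29
```

A heavier boat of the same dimensions scores higher, which matches the idea that more displacement slows the hull's reaction to waves.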

Capsize Screening Formula

This formula attempts to indicate whether a given boat might be too wide and light to readily right itself after being overturned in extreme conditions.

$$CSV = \frac{\text{Beam}}{\sqrt[3]{D/64}}$$

This listing is presented by SailboatListings.com.
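The capsize screening formula can be evaluated for the Carrera 290 (beam 9.45 ft, displacement 2950 lbs), reproducing the tabulated 2.64. The comparison against 2.0 is an assumption added here, not something the listing states: it is a commonly cited rule of thumb that boats with a value of 2.0 or less are preferred for offshore work. The function name is illustrative.

```python
# Carrera 290 data-sheet values (feet, pounds).
BEAM_FT = 9.45
DISPLACEMENT_LBS = 2950

# Assumed rule-of-thumb threshold; not taken from the listing.
OFFSHORE_GUIDELINE = 2.0


def capsize_screening_value(beam_ft: float, disp_lbs: float) -> float:
    """CSV = Beam / cube_root(D / 64), with D / 64 giving cubic feet of seawater."""
    return beam_ft / (disp_lbs / 64) ** (1 / 3)


if __name__ == "__main__":
    csv = capsize_screening_value(BEAM_FT, DISPLACEMENT_LBS)
    verdict = "at or below" if csv <= OFFSHORE_GUIDELINE else "above"
    print(f"CSV: {csv:.2f} ({verdict} the {OFFSHORE_GUIDELINE} guideline)")
    # prints "CSV: 2.64 (above the 2.0 guideline)"
```

A value above 2.0 is consistent with the description of the boat as having low righting capability if capsized and being best suited to racing.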

©2024 Sea Time Tech, LLC

Essay on the Impact of Technology on Health Care

Technology has become an integral part of health care. Healthcare organizations in different parts of the world use technology to monitor their patients’ progress, while others use it to store patients’ data (Bonato 37). Patient outcomes have improved due to technology, and for-profit health organizations have significantly increased their income because of it. There is no doubt that technology has influenced medical services in many ways. Therefore, it is fair to conclude that technology has positively affected health care.

First, technology has improved access to medical information and data (Mettler 33). One of the most significant advantages of technology is the ability to store and access patient data. Medical professionals can now track patients’ progress by retrieving data from anywhere. At the same time, the internet has allowed doctors to share medical information rapidly among themselves, which leads to more efficient patient care.

Second, technology has allowed clinicians to gather big data in a limited time (Chen et al. 72). Digital technology allows instant data collection for professionals engaged in epidemiological studies, clinical trials, and other research. Such data collection makes meta-analysis possible and helps healthcare organizations stay on top of cutting-edge trends.

In addition to providing quick access to medical data and big data, technology has improved medical communication (Free et al. 54). Communication is a critical part of health care; nurses and doctors must communicate in real time, and technology makes this possible.
Also, healthcare professionals can now create their own videos and webinars and use online platforms to communicate with other professionals around the globe. Technology has revolutionized how health care services are rendered.

But apart from improving health care, critics argue that technology has added extra work for medical professionals (de Belvis et al. 11). Physicians need excellent clinical skills and knowledge of the human body. Today, they must also understand technology, which some find challenging. Technology has also improved access to data, allowing physicians to study and understand patients’ medical histories. However, this access has opened the door to unethical activities such as computer hacking (de Belvis et al. 13). Today, patients risk losing their medical information, including their Social Security numbers, addresses, and other critical information.

Despite the improvements that have come with adopting technology, there is always the possibility that digital devices might fail. If the makers of a given technology do not have a sustainable business process or a good track record, their technologies might fail, and many people, including patients and doctors who rely solely on technology, might be affected when they do. Apart from equipment failure, technology has created room for laziness within hospitals. Doctors and patients rely heavily on medical technology for problem-solving. Likewise, medical technologies that use machine learning have taken over some decision-making in hospitals; today, medical tools are solving people’s problems. Technology has been great for our hospitals, but the speed at which hospitals are adopting technological processes is alarming. Technology often fails, and when it does, health care may be significantly affected.
Doctors and patients who use technology may be forced to go back to traditional methods of health care.

Works Cited

Bonato, P. “Advances in Wearable Technology and Its Medical Applications.” *2010 Annual International Conference of the IEEE Engineering in Medicine and Biology*, 2010, pp. 33-45.

Chen, Min, et al. “Disease Prediction by Machine Learning Over Big Data from Healthcare Communities.” *IEEE Access*, vol. 5, 2017, pp. 69-79.

De Belvis, Antonio Giulio, et al. “The Financial Crisis in Italy: Implications for the Healthcare Sector.” *Health Policy*, vol. 106, no. 1, 2012, pp. 10-16.

Free, Caroline, et al. “The Effectiveness of M-Health Technologies for Improving Health and Health Services: A Systematic Review Protocol.” *BMC Research Notes*, vol. 3, no. 1, 2010, pp. 42-78.

Mettler, Matthias. “Blockchain Technology in Healthcare: The Revolution Starts Here.” *2016 IEEE 18th International Conference on E-Health Networking, Applications and Services (Healthcom)*, 2016, pp. 23-78.

For Better or Worse, Technology Is Taking Over the Health World

For many people over the past year and a half, the world has existed primarily through a screen. With social distancing measures in place to protect individuals from becoming infected with the coronavirus, technology has stepped in to fill the void of physical connections. It has also become a space for navigating existing and new mental health conditions through virtual therapy sessions, meditation apps, mental health influencers, and beyond.

“Over the years, mental health and technology have started touching each other more and more, and the pandemic accelerated that in an unprecedented way,” says Naomi Torres-Mackie, PhD, the head of research at The Mental Health Coalition, a clinical psychologist at Lenox Hill Hospital, and an adjunct professor at Columbia University. “This is especially the case because the pandemic has highlighted the importance of mental health for everyone as we struggle to make sense of an overwhelming new world and can find mental health information and services online.”

This shift is especially critical given the tremendous spike in mental health conditions. Between January and June 2019, 11% of US adults reported experiencing symptoms of an anxiety or depressive disorder. In January 2021, 10 months into the pandemic, that figure had risen to 41.1% in one survey. Research also points to a potential connection, for some people, between having COVID-19 and developing a mental health condition, whether or not they previously had one.

The pandemic’s bridge between mental health and technology has helped to “meet the needs of many suffering from depression, anxiety, life transition, grief, family conflict, and addiction,” says Miyume McKinley, MSW, LCSW, a psychotherapist and founder of Epiphany Counseling, Consulting & Treatment Services.
This increased reliance on technology to facilitate mental health care and support appears to be permanent. Torres-Mackie has watched mental health clinicians drop their apprehension about virtual services throughout the pandemic and believes those services will continue for good. “Almost all therapists seem to be at least offering virtual sessions, and a good portion have transitioned their practices to be entirely virtual, giving up their traditional in-person offices,” adds Carrie Torn, MSW, LCSW, a licensed clinical social worker and psychotherapist in private practice in Charlotte, North Carolina.

The general public is also more receptive to technology’s expanded role in mental health care. “The pandemic has created a lasting relationship between technology, and it has helped increase access to mental health services across the world,” says McKinley. “There are lots of people seeking help who would not have done so prior to the pandemic, either due to the discomfort or because they simply didn’t know it was possible to obtain such services via technology.”

Accessibility Is a Tremendous Benefit of Technology

Every expert interviewed agreed: accessibility is an undeniable and indispensable benefit of mental health’s increasing presence online. Torn points out, “We can access information, including mental health information and treatment like never before, and it’s low cost.”

A 2018 study found that, at the time, 74% of Americans didn’t view mental health care as accessible to everyone. Participants cited long wait times, a lack of affordable options, low awareness, and social stigma as barriers to mental health care.
The evolution of mental health and technology has alleviated some of these issues, whether through influencers creating open discussions around mental health and normalizing it or through low-cost therapy apps. In addition, wait times may shrink when people are no longer tied to seeing a therapist in their immediate area.

While some people may still be apprehensive about trying digital therapy, research has shown that it is an effective strategy for managing your mental health. A 2020 review of 17 studies published in EClinicalMedicine found that online cognitive-behavioral therapy sessions were at least as effective as in-person sessions at reducing the severity of depression symptoms. There was no significant difference in participant satisfaction between the two options.

There Are Limitations to Mental Health and Technology’s Increasing Closeness

One of the most prevalent limitations of technology-fueled mental health care and awareness is the possibility of misleading or inaccurate information. If you’re attending digital sessions with a therapist, it’s easy to check their qualifications and reviews. For most other online mental health resources, however, verifying their expertise and benefits can be more challenging, but it remains just as critical.

“The risk of greater access is that the floodgates are open for anyone to say anything about mental health, and there’s no vetting process or way to truly check credibility,” says Torres-Mackie. To that point, James Giordano, PhD, MPhil, professor of neurology and ethics at Georgetown University Medical Center and author of the book “Neurotechnology: Premises, Potential, and Problems,” cautions that, while there are guiding institutions, the market still contains “unregulated products, resources, and services, many of which are available via the internet.
Thus, it’s very important to engage due diligence when considering the use of any mental health technology.”

McKinley raises another valuable point: a person’s home is not always a space where they can securely explore their mental health. “For many individuals, home is not a safe place due to abuse, addiction, toxic family, or unhealthy living environments,” she says. “Despite technology offering a means of support, if the home is not a safe place, many people won’t seek the help or mental health treatment that they need. For some, the therapy office is the only safe place they have.” Because of the pandemic and the general lack of private places outside the home to explore personal feelings, someone in this situation may struggle to find opportunities for help.

Torn explains that therapists who work for tech platforms can also suffer from burnout and low pay. She claims that some of these platforms prioritize seeing new clients instead of giving existing clients time to grow their relationship with a therapist. “I’ve heard about clients having to jump from one therapist to the next, or therapists who can’t even leave spots open for their existing clients, and instead their schedule gets filled with new clients,” she says. “Therapists are burning out in general right now, and especially on these platforms, which leads to a lower quality of care for clients.”

Screen Time Can Also Have a Negative Impact

As mental health care continues to spread into online platforms, clinicians and individuals must contend with society’s growing addiction to tech and the negative effects of extended screen time. Social media, in particular, has been shown to negatively impact an individual’s mental health.
A 2019 study looked at how social media affected feelings of social isolation in 1,178 students aged 18 to 30. Positive experiences on social media were not associated with lower social isolation, but each 10% increase in negative experiences was associated with a 13% increase in feelings of social isolation.

While certain options like Zoom therapy and mental health influencers require looking at a screen, you can use other digital tools, such as meditation apps, without constantly staring at your device.

What to Be Mindful of as You Explore Mental Health Within Technology

Nothing is all bad or all good, and that holds true for mental health’s increased presence within technology. What’s critical is being aware that “technology is a tool, and just like any tool, its impact depends on how it's used,” says Torres-Mackie.

For example, technology can produce positive results if you use the digital space to access treatment that you might otherwise have struggled to get, support your mental well-being, or gather helpful, credible information about mental health. In contrast, she explains, diving into social media or other avenues only to compare yourself with others and avoid your responsibilities can have negative repercussions on your mental health and relationships.

Giordano stresses the importance of staying vigilant about your relationship with and reliance on tech, and about your power to control it. With that in mind, pay attention to how much time you spend online. “We are spending less time outside, and more time glued to our screens. People are constantly comparing their lives to someone else's on social media, making it harder to be present in the moment and actually live our lives,” says Torn.

Between the increase in necessary services moving online and trying to connect with people through a screen, it’s critical to take time away from your devices. According to a 2018 study, changing your social media habits, in particular, can improve your overall well-being.
Participants limited Instagram, Facebook, and Snapchat use to 10 minutes a day per platform for three weeks. At the end of the study, they showed significant reductions in depression and loneliness compared to the control group. However, even the increased awareness of their social media use appeared to help the control group lower feelings of anxiety and fear of missing out.

“Remember, it’s okay to turn your phone off. It’s okay to turn notifications off for news, apps, and emails,” says McKinley. Take opportunities to step outside, spend time with loved ones, and explore screen-free self-care activities. She adds, “Most of the things in life that make life worthwhile cannot be found on our devices, apps, or through technology; it’s found within ourselves and each other.”

Sources

Kaiser Family Foundation. The implications of COVID-19 for mental health and substance use.

Taquet M, Luciano S, Geddes JR, Harrison PJ. Bidirectional associations between COVID-19 and psychiatric disorder: retrospective cohort studies of 62 354 COVID-19 cases in the USA. *Lancet Psychiatry*. 2021;8(2):130-140. doi:10.1016/S2215-0366(20)30462-4

Luo C, Sanger N, Singhal N, et al. A comparison of electronically-delivered and face to face cognitive behavioural therapies in depressive disorders: a systematic review and meta-analysis. *EClinicalMedicine*. 2020;24:100442. doi:10.1016/j.eclinm.2020.100442

Primack BA, Karim SA, Shensa A, Bowman N, Knight J, Sidani JE. Positive and negative experiences on social media and perceived social isolation. *Am J Health Promot*. 2019;33(6):859-868. doi:10.1177/0890117118824196

Hunt MG, Marx R, Lipson C, Young J. No more FOMO: Limiting social media decreases loneliness and depression. *J Soc Clin Psychol*. 2018;37(10):751-768. doi:10.1521/jscp.2018.37.10.751



Speech on Impact of Technology on Our Health

Technology has touched every aspect of our lives. It has completely changed our days, from the alarm clock in the morning to TV shows or internet surfing in the evening. We all have access to electronic devices and gadgets, which we use for our day-to-day needs. Do you want to talk to someone? You have a cell phone or a social media account. Although technology has improved our lives in several ways, it also brings certain health issues. One classic example is the blue light emitted by our smartphones and laptops, which can cause eyestrain and headaches. Below, we have highlighted a speech on the impact of technology on our health for students.

2-Minute Speech on Impact of Technology on Our Health

‘Hello and welcome to everyone present here. I would like to thank you all for giving me this wonderful opportunity to present a speech on the Impact of Technology on Our Health.’

‘Health is something we all care about. Remember the saying: health is wealth. Excessive use of technological devices has negative impacts on our mental, psychological, social, and physical health.’

‘Some of the common impacts of technology on our physical health are obesity and related conditions. We youngsters spend long hours in front of computer screens or smartphones, which causes pain in our eyes, known as eyestrain. Technology has given us easy access to almost any information in the world, be it about the past, the present, or sometimes even the future. Because of this, we remain seated for long hours on a single chair, bed, or sofa, which affects our physical health.’

‘Another impact of technology on our health is its influence on our mental well-being. Along with benefits like unprecedented connectivity and access to information, new challenges have emerged. How many times a day does your cell phone buzz with notifications?
How many times do you check the time on your phone? Constantly checking your phone or computer can increase mental stress and anxiety, and may even lead to depression.’

‘How can we neglect the threat AI poses to employment and job security? No doubt AI and automation have led to some outstanding developments in various fields, but job displacement can have devastating consequences. The fear of losing your job may contribute to mental health problems and can harm societal well-being.’

‘We must use technology to gain information and socialize with people, but spending long hours online, playing video games, surfing the internet unnecessarily, and various other activities lead to unhealthy habits. We need to recognize the potential risks associated with technology use and adopt a balanced lifestyle. Thank you.’

10 Lines on the Impact of Technology

Here are 10 lines on the impact of technology. Feel free to add them to your speech or to any writing on topics related to technology.

Ans: Technology has greatly improved our lives in several ways. It has allowed us to access almost any piece of information around the world in real time, build huge skyscrapers, travel to faraway places within hours, and even travel to outer space. Technology has transformed our traditional way of living into today’s modern world. Our education and health systems, law and order, and even governance rely heavily on technology to function smoothly.

Ans: Technology has touched all aspects of our lives. Today, almost every industry and sector relies on technology to function smoothly. What we once considered impossible is now achievable because of technology. However, relying too much on something makes us vulnerable, and technology is no exception. Technology affects our health in several ways: physically, mentally, psychologically, and socially. Staring at a computer or mobile screen for long periods causes eyestrain and body pain, and keeping ourselves busy on social media isolates us from the real world. We also come to expect life to look the way it does on the internet, which is not the case in reality. When our expectations are not met, it can lead to psychological problems like mental stress and depression.

Ans: ‘Excessive use of electronic devices causes physical, psychological, and social problems.’ ‘Technology is the use of scientific knowledge for our well-being.’ ‘Technology is a good servant but a bad master.’ ‘Technology has allowed us to accomplish what was once impossible.’ ‘Portable gadgets like mobile phones and laptops have made information accessible to everyone.’

How Technology Affects Our Lives – Essay

Introduction

Technology is a vital component of life in the modern world. People are so dependent on technology that they cannot live without it. Technology is important and useful in all areas of human life today. It has made life easy and comfortable by making communication and transport faster and easier (Harrington, 2011, p.35). It has made education accessible to all and has improved healthcare services. Technology has made the world smaller and a better place to live. Without technology, fulfilling human needs would be a difficult task. Human beings fulfilled their needs before the advent of technology; however, technology has made fulfilling them easier and faster. It is hard to imagine life without technology. Technology is useful in the following areas: transport, communication, interaction, education, healthcare, and business (Harrington, 2011, p.35). Despite its benefits, technology has negative impacts on society. Examples include the development of controversial medical practices such as stem cell research, and a growing tendency toward solitude due to changes in how people interact. For example, social media has changed the way people interact. Technology has also led to the introduction of cloning, which is highly controversial because of its ethical and moral implications. The growth of technology has changed the world significantly and has influenced life in a great way. Technology is changing every day and continues to influence communication, healthcare, governance, education, and business.

Technology in Communication

Technology has contributed fundamentally to improving people’s lifestyles.
It has improved communication by bringing the Internet and devices such as mobile phones into people’s lives. One of the most influential early inventions in communication was the telephone, invented by Alexander Graham Bell in 1876. Since then, other inventions such as the Internet and the mobile phone have made communication faster and easier. For example, the Internet has improved the ways in which people exchange views, opinions, and ideas through online discussions (Harrington, 2011, p.38). In the past, people in different geographical regions could not easily communicate; technology has eliminated that barrier. People in different regions can now send and receive messages within seconds. Online discussions have made it easy for people to keep in touch, and they have also made socializing easier. Through online discussions, people find better solutions to problems by exchanging opinions and ideas (Harrington, 2011, p.39). Examples of technologies that facilitate online discussions include email, online forums, dating websites, and social media sites. Another technological invention that changed communication was the mobile phone. In the past, people relied on letters to send messages to people who were far away. Mobile phones have made communication efficient and reliable. They facilitate both local and international communication. In addition, they enable people to respond to emergencies and other situations that require quick responses. Other uses of cell phones include transferring data through technologies such as infrared and Bluetooth, entertainment, and serving as miniature personal computers (Harrington, 2011, p.40). The latest mobile phones can access the Internet, which gives their users a wealth of information in diverse fields.
For business owners, mobile phones improve the efficiency of business operations because they allow owners to keep in touch with their employees and suppliers (Harrington, 2011, p.41). In addition, owners can quickly receive information about the progress of their business.

Technology in Healthcare

Technology has contributed significantly to the healthcare sector. For example, it has made vital contributions in the fields of disease prevention and health promotion. Technology has aided the understanding of the pathophysiology of diseases, which has led to the prevention of many diseases. For example, understanding the pathophysiology of gastrointestinal tract and blood diseases has aided in their effective management (Harrington, 2011, p.49). Technology has enabled medical practitioners to make discoveries that have changed the healthcare sector. These include the discovery that peptic ulceration is caused by a bacterial infection and the development of drugs to treat schizophrenia and depressive disorders, which afflict a large portion of the population (Harrington, 2011, p.53). Vaccines against polio and measles have eliminated these diseases from many countries, and children who are vaccinated against them are at far lower risk of contracting them. The development of vaccines was facilitated by technology; without it, certain diseases would still be causing deaths in great numbers. Vaccines play a significant role in disease prevention. Technology is used in health promotion in different ways. First, health practitioners use various technological methods to improve health care. eHealth refers to the use of information technology to improve healthcare, for example by providing health information to people over the Internet. In this field, technology is used in three main ways: as an intervention tool, for conducting research studies, and for professional development (Lintonen et al., 2008, p. 560).
According to Lintonen et al. (2008), “e-health is the use of emerging information and communications technology, especially the internet, to improve or enable health and healthcare” (p. 560). It is largely used to support health care interventions directed at individuals. Second, technology is used to improve the well-being of patients during recovery. Bedside technology has contributed significantly to helping patients recover. For example, medical professionals have started using Xbox gaming technology to develop a revolutionary process that measures limb movements in stroke patients (Tanja-Dijkstra, 2011, p.48). This helps them recover their manual skills. The main aim of this technology is to help stroke patients do more exercises, increasing their rate of recovery and reducing the frequency of hospital visits (Lintonen et al., 2008, p. 560).

Technology in Government

The government has used technology in two main areas: delivering citizen services and improving defense and national security (Scholl, 2010, p.62). The government is spending large sums of money on wireless technologies, mobile gadgets, and technological applications in an effort to improve its operations and ensure that the needs of citizens are fulfilled. For example, to enhance safety and improve service delivery, Cisco developed a networking approach known as Connected Communities. This networking system connects citizens with the government and the community. It was developed to improve the safety and security of citizens, improve government service delivery, empower citizens, and encourage economic development. The government uses technology to provide information and services to citizens, which encourages economic development and fosters social inclusion (Scholl, 2010, p.62). Technology is also useful in improving national security and the safety of citizens.
It integrates several wireless technologies and applications that make it easy for security agencies to access and share important information effectively. Security agencies widely use technology to reduce vulnerability to terrorism. Advanced screening devices are used in airports, hospitals, shopping malls, and public buildings to screen people for explosives and other dangerous materials or devices that may compromise the safety of citizens (Bonvillian and Sharp, 2001, para. 2). In addition, security agencies use surveillance systems to restrict access to certain areas. They also use advanced screening and tracking methods to improve security in places that are prone to terrorist attacks (Bonvillian and Sharp, 2001, para. 3).

Technology in Education

Technology has made significant contributions to the education sector. It is used to enhance teaching and learning through different technological methods and resources. These include classrooms with digital tools such as computers, online schools, blended learning, and a wide variety of online learning resources (Barnett, 1997, p.74). Digital learning tools used in classrooms facilitate learning in different ways. They expand the range of learning materials and experiences available to students, improve student participation, make learning easier and quicker, and reduce the cost of education (Barnett, 1997, p.75). For example, online schools and freely available online learning materials reduce the cost of purchasing learning materials. They also reduce the expenses of program delivery. Technology has improved teaching by introducing new methods that facilitate connected teaching. These methods virtually connect teachers to their students, allowing teachers to provide learning materials and course content effectively.
In addition, teachers can give students an opportunity to personalize their learning and access all the learning materials provided. Technology enables teachers to serve the academic needs of different students. It also enhances learning because distance is no longer a barrier and students can contact their teachers easily (Barnett, 1997, p.76). Technology plays a significant role in changing how teachers teach. It enables educators to evaluate the learning abilities of different students in order to devise the teaching methods that best achieve learning objectives. Through technology, teachers are able to relate well with their students and to help and guide them. Educators assume the roles of coaches, advisors, and experts in their subject areas. Technology helps make teaching and learning enjoyable and gives them meaning beyond the traditional classroom setting (Barnett, 1997, p.81).

Technology in Business

Technology is used in the business world to improve efficiency and increase productivity. Most importantly, technology is used as a tool to foster innovation and creativity (Ray, 2004, p.62). Other benefits of technology to businesses include a lower risk of injury to employees and improved competitiveness in the market. For example, many manufacturing businesses use automated systems instead of manual systems. These systems eliminate the cost of hiring employees to oversee manufacturing processes. They also increase productivity and improve accuracy by reducing errors (Ray, 2004, p.63). Technology improves productivity through Computer-aided Manufacturing (CAM), Computer-integrated Manufacturing (CIM), and Computer-aided Design (CAD). CAM reduces labor costs, increases the speed of production, and ensures a higher level of accuracy (Hunt, 2008, p.44).
CIM reduces labor costs, while CAD improves the quality and standards of products and reduces the cost of production. Another example of using technology to improve productivity and output is the use of database systems to store data and information. Many businesses store their data in database systems that make information access fast, easy, and reliable (Pages et al., 2010, p.44). Technology has also changed how international business is conducted. With the advent of e-commerce, businesses became able to trade on the international market through the Internet (Ray, 2004, p.69). This gives them a large market for their products and services, and it means that most markets are open 24 hours a day. For example, customers can shop for books or music on Amazon.com at any time of the day. E-commerce has given businesses the opportunity to expand and operate internationally. Countries such as China and Brazil are taking advantage of the opportunities presented by technology to grow their economies. E-commerce reduces the complexity of conducting international trade (Ray, 2004, p.71), and its many components make international trade easy and fast. For example, a BOES system allows merchants to execute trade transactions in any language or currency, monitor every step of a transaction, and calculate all the costs involved, such as taxes and freight costs (Yates, 2006, p.426). Financial researchers claim that a BOES system can reduce the cost of an international transaction by approximately 30% (Ray, 2004, p.74). BOES enables businesses to import and export different products through the Internet. This system of trade is efficient and creates a fair environment in which small and medium-sized companies can compete with the large companies that dominate the market.

Negative Impact of Technology

Despite its many benefits, technology has negative impacts.
It has negative impacts on society because it affects communication and has changed the way people view social life. First, people have become more antisocial because of changes in methods of socializing (Harrington, 2008, p.103). Today, one does not need to interact physically with another person in order to establish a relationship. The Internet is awash with dating sites full of people looking for partners and friends. The ease of forming friendships and relationships online has discouraged many people from engaging in traditional social activities. Second, technology has hurt the economic status of many families by contributing to high rates of unemployment. People lose jobs when organizations and businesses adopt new technology (Harrington, 2008, p.105). For example, many employees lose their jobs when manufacturing companies replace them with automated machines that are more efficient and cost-effective. Many families struggle because they lack a steady income. In addition, technological advancements have led to the closure of certain companies whose products or services are no longer in demand. For example, the rise of digital cameras contributed to Kodak’s 2012 bankruptcy filing, as people no longer needed analog cameras. Digital cameras replaced analog cameras because they are easy to use and efficient. Many people lost their jobs due to these changes in technology. Third, technology has made people lazy and unwilling to engage in strenuous activities (Harrington, 2008, p.113). For example, video games have replaced physical activities that are vital to the health of young people. Children spend so much time watching television and playing video games that they have little or no time for physical activity. This sedentary lifestyle, often combined with unhealthy eating habits, contributes to conditions such as diabetes.
Technology has also sparked heated debates in the healthcare sector. It has made possible medical practices such as stem cell research, embryo implantation, and assisted reproduction. Even though these practices have been proven viable, they are widely criticized on the grounds of their moral implications for society. There are many controversial medical technologies, such as gene therapy, pharmacogenomics, and stem cell research (Hunt, 2008, p.113). Using genetic research to find new cures for diseases is important and laudable. However, the medical implications of these treatment methods, and the ethical and moral issues associated with them, are critical. Gene therapy is opposed mainly by religious people, who claim that it is against natural law to alter a person’s genetic makeup in any way (Hunt, 2008, p.114). The use of embryonic stem cells in research is highly controversial, unlike the use of adult stem cells. The controversy stems from the source of the cells: they are obtained from embryos. Many people believe that life begins at conception. Therefore, using embryos in research means destroying them to obtain their cells. The use of embryonic cells in research is thus viewed in the same light as abortion: eliminating a life (Hunt, 2008, p.119). These issues have led to disagreements between the scientific and religious communities.

Conclusion

Technology is a vital component of life in the modern world. People are so dependent on technology that they cannot live without it. Technology is important and useful in all areas of human life today. It has made life easy and comfortable by making communication and travel faster, making movement between places easier, speeding up tasks, and easing interactions. Technology is useful in the following areas of life: transport, communication, interaction, education, healthcare, and business.
Despite its benefits, technology has negative impacts on society. Technology has eased communication and transport. The invention of the telephone and later the mobile phone changed the face of communication entirely. People in different geographical regions can communicate easily and in record time. In health care, technology has made significant contributions to disease prevention and health promotion. The development of vaccines has eradicated certain diseases, and the Internet is vital in promoting health and health care. The government uses technology to improve the delivery of services to citizens and to strengthen defense and security. In the education sector, teaching and learning processes have undergone significant changes owing to technology. Teachers are able to relate to different types of learners, and learners have access to various resources and learning materials. Businesses benefit from technology through reduced costs and more efficient operations. Despite these benefits, technology has certain disadvantages. It has negatively affected human interaction and socialization and has contributed to widespread unemployment. In addition, its application in the healthcare sector has sparked controversy over medical practices such as stem cell research and gene therapy. Technology is very important and has made life easier and more comfortable than it was in the past.

References

Barnett, L. (1997). Using Technology in Teaching and Learning. New York: Routledge.

Bonvillian, W., and Sharp, K. (2001). Homeland Security Technology. Retrieved from https://issues.org/bonvillian/

Harrington, J. (2011). Technology and Society. New York: Jones & Bartlett Publishers.

Hunt, S. (2008). Controversies in Treatment Approaches: Gene Therapy, IVF, Stem Cells and Pharmacogenomics. Nature Education, 19(1), 112-134.

Lintonen, P., Konu, A., and Seedhouse, D. (2008). Information Technology in Health Promotion. Health Education Research, 23(3), 560-566.

Pages, J., Bikifalvi, A., and De Castro Vila, R. (2010). The Use and Impact of Technology in Factory Environments: Evidence from a Survey of Manufacturing Industry in Spain. International Journal of Advanced Manufacturing Technology, 47(1), 182-190.

Ray, R. (2004). Technology Solutions for Growing Businesses. New York: AMACOM Div American Management Association.

Scholl, H. (2010). E-government: Information, Technology and Transformation. New York: M.E. Sharpe.

Tanja-Dijkstra, K. (2011). The Impact of Bedside Technology on Patients’ Well-Being. Health Environments Research & Design Journal (HERD), 5(1), 43-51.

Yates, J. (2006). How Business Enterprises use Technology: Extending the Demand-Side Turn. Enterprise and Society, 7(3), 422-425.

IvyPanda. (2018, July 2). How Technology Affects Our Lives – Essay. https://ivypanda.com/essays/technology-affecting-our-daily-life/

Essays on the impact of health information technology on health care providers and patients

Published in: State University of New York at Buffalo, United States



Essay on Negative Effects Of Technology On Health

Students are often asked to write an essay on the negative effects of technology on health in their schools and colleges. If you’re looking for one too, we have written 100-word, 250-word, and 500-word essays on the topic. Let’s take a look.

100 Words Essay on Negative Effects Of Technology On Health

Too Much Screen Time

When we stare at screens like phones and computers for a long time, our eyes can get tired and sore. This is called eye strain. Kids might also find it hard to sleep if they use screens before bed, because the light from the screen tricks their brains into thinking it’s still daytime.

Poor Posture and Pain

Less Physical Activity

Playing games or watching videos on a device can be fun, but it means we move less. Running, jumping, and playing outside keep our bodies healthy. If we spend too much time with technology, we might not get enough exercise, which is important for a strong heart and muscles.

Unhealthy Weight Gain

Not moving much and snacking while using devices can lead to weight gain. Eating without thinking while we look at a screen can make us eat more than our body needs, which makes it hard to stay at a healthy weight.

Mental Health Issues

250 Words Essay on Negative Effects Of Technology On Health

When we use our phones, computers, or tablets for a long time, it can be bad for our eyes. Staring at screens can make our eyes tired and can cause headaches. This is because our eyes have to work hard to look at the bright light and small text on screens. It is important to take breaks and look at things far away to give our eyes a rest.

Technology can also make it hard for us to sleep well.
The blue light from screens can trick our brains into thinking it’s still daytime, making it tough to fall asleep. Not getting enough sleep can make us feel tired and grumpy the next day. It’s good to stop using screens at least an hour before bed to help our brains get ready for sleep.

Not Enough Exercise

Playing outside or doing sports is great for our health. But sometimes, we might choose to play video games or watch TV instead. This means we sit still for too long and don’t move our bodies enough. Moving around is important to keep our muscles and bones strong and to stay fit.

Unhealthy Eating

Sometimes, when we are watching something interesting, we might eat snacks without thinking. This can lead to eating too much junk food, which is not good for our bodies. It’s better to eat snacks at a table without screens so we can pay attention to how much we are eating.

Mental Health

Lastly, using technology a lot can make us feel lonely or sad, especially if we spend more time online than with real people. Talking and playing with friends and family is very important for our happiness. We should try to balance the time we spend with technology and with others.

500 Words Essay on Negative Effects Of Technology On Health

Physical Problems From Too Much Screen Time

Too Little Sleep

Technology can also mess with our sleep, which is super important for our health. Bright screens can trick our brains into thinking it’s still daytime, so we don’t feel sleepy. If we use our devices at night, it can be harder to fall asleep and stay asleep. Not getting enough sleep can make us feel grumpy, have trouble thinking, and weaken our immune system, which helps us fight off sickness.

Mental Health Struggles

Our minds can be hurt by technology, too. Social media can make us feel bad about ourselves if we think everyone else’s life looks perfect and ours doesn’t. It can also make us feel lonely if we’re scrolling instead of talking to people face-to-face.
Sometimes, the things we see online can make us scared or sad, and those feelings can stick with us even after we turn off our devices.

Less Exercise and Outdoor Time

Bad Habits and Safety Risks

Technology is a big part of our lives, and it can be really helpful. But it's important to remember that using it too much can be bad for our health. We should try to take breaks from screens, get plenty of sleep, spend time with friends and family in person, and play outside. By finding a good balance, we can enjoy technology without letting it hurt our health.

2 Minute Speech on the Impact of Technology on Our Health in English

Good morning, everyone. Today I am going to give a speech on the impact of technology on our health. Although some forms of technology may have improved the world, there is evidence that technology, and especially its excessive use, can be harmful. Social media and mobile gadgets may cause psychological problems as well as physical ones, such as eyestrain and trouble focusing on important tasks. They might also worsen more serious conditions such as depression. Children and teenagers, who are still developing, may be more negatively affected by excessive use of technology. The opposite was also true, though. Higher levels of despair and anxiety were observed in people who felt they had more negative social interactions online and who were more likely to engage in social comparison. People can spend long periods focused on devices like mobile phones, computers, and handheld tablets, and this may result in eye fatigue.

Impacts of technology on children's health: a systematic review

Raquel Cordeiro Ricci (a), Aline Souza Costa de Paulo, Alisson Kelvin Pereira Borges de Freitas, Isabela Crispim Ribeiro, Leonardo Siqueira Aprile Pires, Maria Eduarda Leite Facina, Milla Bitencourt Cabral, Natália Varreira Parduci, Rafaela Caldato Spegiorin, Sannye Sabrina González Bogado, Sergio Chociay Junior, Talita Navarro Carachesti, Mônica Mussolini Larroque

(a) Universidade Federal de Mato Grosso do Sul, Três Lagoas, MS, Brazil.

The authors declare no conflict of interest.

Objective: To identify the consequences of technology overuse in childhood.

Data source: A systematic review was carried out in the electronic databases PubMed (National Library of Medicine of the National Institutes of Health) and BVS (Virtual Health Library). It considered articles published from 2015 to 2020 in English, Portuguese, and Spanish, using the terms "Internet", "Child", and "Growth and Development".

Data synthesis: A total of 554 articles were found, and 8 were included in the analysis. The studies' methodological quality was assessed with the Strobe and Consort criteria, and scores ranged from 17 to 22 points. The articles showed both positive and negative factors associated with the use of technology in childhood, although most emphasize the harmful aspects. Excessive use of the internet and games and exposure to television are associated with intellectual deficits and mental health issues, but they can also enable psychosocial development.

Conclusions: Preventing internet use is a utopian measure, since society as a whole makes use of technology. The internet is associated with benefits as well as harms. It is important to optimize internet use and reduce its risks through two measures. First, parents and caregivers should act as moderators. Second, health professionals should be trained to guide them better.
INTRODUCTION

Nowadays, information and communication technologies are an increasingly large part of children's daily routines. Data from the Brazilian Institute of Geography and Statistics (IBGE) show that, among Brazilian children aged 10 years and over, internet use rose from 69.8% in 2017 to 74.7% in 2018. The most frequent activities requiring internet access are exchanging messages, making voice and/or video calls, and watching videos such as series and movies.[1]

Studies on digital technologies have been carried out in several fields, since online activities vary widely and reflect the broad range of information available on the internet. From this perspective, many questions have been raised about the impacts of information and communication technologies on children's physical and psychosocial development. In the cognitive sphere, commonly cited effects include those on sleep, memory, reading ability, concentration, and the ability to communicate in person. Anxiety symptoms when children are away from their cell phones are also reported.[2,3] Building a self-image through technological tools amplifies a phenomenon associated with modernity and the emergence of large cities: turning intimacy into a spectacle. Furthermore, intense consumption of content can cause anxiety, panic, and even depression. In children with pre-existing mental health conditions who require monitoring, these effects can be even more intense.[4]

With this in mind, the World Health Organization (WHO) published a series of recommendations for parents on the exposure of children of different age groups to digital technologies. Children under the age of 5 should not spend more than 60 minutes a day in passive activities in front of a smartphone, computer, or TV screen. Children under 12 months of age should not spend any time at all in front of electronic devices. The goal is for boys and girls up to 5 years old to replace electronics with physical activities or real-world interactions, such as reading and listening to stories with caregivers.[5] These guidelines are part of a United Nations (UN) strategy to raise awareness of sedentary lifestyles and obesity. Thus, this range of influences can trigger or intensify various pathologies.
The aim of this study was, therefore, to identify the positive and negative consequences of technology overuse in childhood.

METHOD

The selection process and the development of this systematic review followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (Prisma) protocol.[6] This review was registered with the International Prospective Register of Systematic Reviews (Prospero) under number CRD42021248396. The PubMed (National Library of Medicine, National Institutes of Health) and Virtual Health Library (VHL) electronic databases were searched from March to July 2020. The purpose was to systematically analyze original studies addressing information and communication technologies (internet, social media, etc.) in child development. The analysis was based on a guiding question: what is the impact of information and communication technologies on children's physical and psychosocial development? Medical Subject Headings (MeSH) were used to define the search terms, and an exploratory search was then carried out to identify keywords within the theme. The English terms "internet", "child", and "growth and development" were combined with the Boolean operator AND. The bibliographic references of the selected articles were also checked. For the articles to be included, the following aspects were considered:

Studies carried out with adolescents, adults, and the elderly were excluded, as were theses, dissertations, monographs, duplicate studies, and case studies. Articles were searched and selected in two stages: first by title and abstract, then by accessing and evaluating the full texts. Studies that met the eligibility criteria were fully analyzed by two independent researchers, whose evaluations were then compared to check for agreement. In cases of uncertainty about a study's eligibility, a third evaluator took part. The data were then extracted and entered into predefined data tables.

The methodological quality of the observational articles was assessed with the Strengthening the Reporting of Observational Studies in Epidemiology (Strobe) initiative, which provides evaluation criteria for this type of study. The maximum score is 22 points, distributed over the following items: title and/or abstract (one item), introduction (two items), methodology (nine items), results (five items), discussion (four items), and funding (one item).[7,8] All observational studies were evaluated item by item. Each item present added 1 point, and the total was scored according to Table 1.

The methodological quality of the single randomized trial was assessed with the Consolidated Standards of Reporting Trials (Consort) statement. Its 25-item checklist is divided as follows:
- title and abstract (one item with two sub-items);
- introduction (one item with two sub-items);
- methods (five items), plus a topic on randomization (five items);
- results (seven items);
- discussion (three items);
- other information, such as registration, protocols, and funding (three items).[9,10]

Each item met counts as 1 point, and the points were summed for the paper. The methodological quality score of this randomized trial is shown in Table 1.

To summarize the studies' characteristics and main results descriptively, the following information was extracted from each selected article: name of the main author, year of publication, country where the study was performed, design, sample size, type of technology evaluated, statistical variables, main results, and limitations.

RESULTS

Searches on PubMed and VHL using the descriptors "internet", "child", and "growth and development" retrieved 550 articles. After the inclusion criteria were applied, 221 studies were selected, and 125 of these were excluded after the titles and abstracts were read. Ninety-two articles were read in full and, based on the inclusion criteria and a detailed analysis, four studies were selected. Four further articles were included after an additional search of the reference lists of the initially selected articles; these also had to meet the inclusion criteria defined in the methodology. Thus, eight articles made up the sample. The flowchart is shown in Figure 1.

Most studies were epidemiological. Almost all were observational (n=7), and only one was an intervention study. The observational studies included were longitudinal and/or cross-sectional (n=5), case-control (n=1), and cohort (n=1) studies.
The only experimental study included was a randomized controlled trial (n=1), as shown in Table 1. The methodological quality scores are also given in Table 1. Most studies were observational (n=7) and were therefore evaluated according to the Strobe criteria.[7] Scores ranged from 17 to 22, and most articles (n=4) scored 20 points, which indicates good methodological quality. The randomized trial scored 18 points on the Consort 2010 checklist, which has a maximum score of 25, and was also considered to be of good quality.[9] The main results on the implications of technology in childhood are detailed in Tables 2 and 3.
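The checklist scoring described in the methods gives one point per item met, summed per study. The small Python sketch below illustrates that scoring. The section names and item counts come from the Strobe and Consort breakdowns given in the methods. The helper names (`max_score`, `score`, `percent_of_max`) and the per-section counts for the example study are hypothetical, chosen for illustration only; they are not the authors' procedure or data. The percentages only restate the reported scores against each checklist's maximum and are not the quality thresholds of Table 1.

```python
# Hypothetical sketch: checklist-based quality scoring, one point per item met.
# Section item counts follow the Strobe and Consort breakdowns in the text;
# the example study below is invented for illustration.

STROBE_SECTIONS = {
    "title/abstract": 1,
    "introduction": 2,
    "methodology": 9,
    "results": 5,
    "discussion": 4,
    "funding": 1,
}

CONSORT_SECTIONS = {
    "title and abstract": 1,
    "introduction": 1,
    "methods": 5,
    "randomization": 5,
    "results": 7,
    "discussion": 3,
    "other information": 3,
}


def max_score(sections: dict[str, int]) -> int:
    """Maximum attainable score: one point for every checklist item."""
    return sum(sections.values())


def score(items_met: dict[str, int], sections: dict[str, int]) -> int:
    """Sum the items met per section, rejecting impossible counts."""
    total = 0
    for name, met in items_met.items():
        if name not in sections:
            raise KeyError(f"unknown section: {name}")
        if not 0 <= met <= sections[name]:
            raise ValueError(f"{name}: {met} items met, only {sections[name]} exist")
        total += met
    return total


def percent_of_max(points: int, sections: dict[str, int]) -> float:
    """Express a score as a percentage of the checklist maximum."""
    return 100 * points / max_score(sections)


if __name__ == "__main__":
    print(max_score(STROBE_SECTIONS))   # 22
    print(max_score(CONSORT_SECTIONS))  # 25
    # Hypothetical observational study missing one methodology item
    # and one discussion item.
    example = {"title/abstract": 1, "introduction": 2, "methodology": 8,
               "results": 5, "discussion": 3, "funding": 1}
    print(score(example, STROBE_SECTIONS))  # 20
    # Reported scores: Strobe range 17 to 22, Consort 18.
    for pts in (17, 20, 22):
        print(f"Strobe {pts}/22 = {percent_of_max(pts, STROBE_SECTIONS):.1f}%")
    print(f"Consort 18/25 = {percent_of_max(18, CONSORT_SECTIONS):.1f}%")
```

The guard in `score` is a design choice that catches data-entry errors. For example, it rejects recording more items met in a section than the checklist contains.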